The RBFNN is three layered feed-forward neural network. This algorithm is very good for clustering also because does not require a priori selection of the number of cluster (in k-mean you need to choose k, here no).A RBFNN is an artificial neural network that uses radial basis functions as activation functions. You can find a very nice python package called somoclu which has got this algorithm implemented and an easy way to visualize the result.

Self-organizing map this is a clustering algorithm based on Neural Networks which create a discretized representation of the input space of the training samples, called a map, and is, therefore, a method to do dimensionality reduction ( SOM). However, what you see in this plot is a projection in a 2D space of your data, so can be not very accurate, but still can give you an idea of how your clusters are distributed. Using such algorithm, you can plot the data in a 2D plot and then visualize your clusters. K-mean: in this case, you can reduce the dimensionality of your data by using for example PCA. Let me suggest two way to go, using k-means and another clustering algorithm. Obviously, if your data have high dimensional features, as in many cases happen, the visualization is not that easy. In many cases, a good way to proceed is through a visualization of your clusters. The problem is how to assign a semantics to each cluster, and thus measure the "performance" of your algorithm. Nevertheless, much useful information can be extrapolated from these algorithms (e.g. Normally, clustering is considered as an Unsupervised method, thus is difficult to establish a good performance metric (as also suggested in the previous comments). Follow intuition according to dataset and the problem your are trying to solve. Note: Elbow Criterion is heuristic in nature, and may not work for your data set.

According to above elbow in line graph, number of optimal cluster is 3. If the line graph looks like an arm - a red circle in above line graph (like angle), the "elbow" on the arm is the value of optimal k (number of cluster). Plt.plot(list(sse.keys()), list(sse.values())) Sse = kmeans.inertia_ # Inertia: Sum of distances of samples to their closest cluster center Kmeans = KMeans(n_clusters=k, max_iter=1000).fit(data) X = pd.DataFrame(iris.data, columns=iris)ĭata = X] Iris dataset example: import pandas as pd So the goal is to choose a small value of k that still has a low SSE, and the elbow usually represents, where we start to have diminishing returns by increasing k. of data points in the dataset, because then each data point is its own cluster, and there is no error between it and the center of its cluster). SSE tends to decrease toward 0 as we increase k (SSE=0, when k is equal to the no.

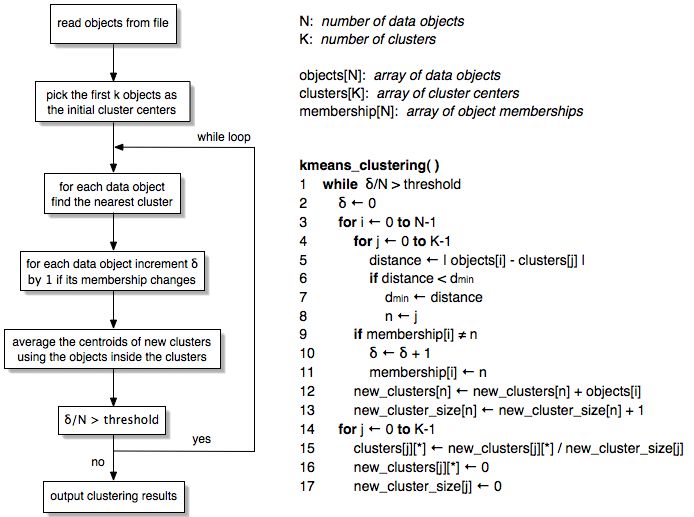

The SSE is defined as the sum of the squared distance between each member of the cluster and its centroid.Ĭalculate Sum of Squared Error(SSE) for each value of k, where k is no. The idea of the Elbow Criterion method is to choose the k(no of cluster) at which the SSE decreases abruptly. We need to calculate SSE to evaluate K-Means clustering using Elbow Criterion. It is not available as a function/method in Scikit-Learn. My question is : does any clustering internal evaluation already exist in Scikit Learn (except from silhouette_score) ? Or in another well known library ?Īpart from Silhouette Score, Elbow Criterion can be used to evaluate K-Mean clustering. Now that I have my KMeans and my three clusters stored, I'm trying to use the Dunn Index to measure the performance of my clustering (we seek the greater index)įor that purpose I import the jqm_cvi package (available here) from jqmcvi import base Prediction = pd.concat(, axis = 1)Ĭlus0 = prediction.locĬlus1 = prediction.locĬlus2 = prediction.loc #We store the K-means results in a dataframe I'm not an expert but I am eager to learn more about clustering. I'm trying to do a clustering with K-means method but I would like to measure the performance of my clustering.
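The k-means plus PCA visualization suggested in this thread could be sketched roughly as follows. This is only an illustration under assumptions: the Iris data stands in for your own features, the helper name `cluster_and_project` and the output file name are made up for the example, and clustering is done in the original feature space with PCA used only for plotting (clustering the PCA-reduced data instead is an equally plausible reading).

```python
import matplotlib
matplotlib.use("Agg")  # non-interactive backend, so the script also runs without a display
import matplotlib.pyplot as plt
from sklearn.cluster import KMeans
from sklearn.datasets import load_iris
from sklearn.decomposition import PCA


def cluster_and_project(features, n_clusters=3, random_state=0):
    """Cluster in the original space, then project points and centers to 2D with PCA."""
    kmeans = KMeans(n_clusters=n_clusters, n_init=10, random_state=random_state)
    labels = kmeans.fit_predict(features)
    pca = PCA(n_components=2)
    points_2d = pca.fit_transform(features)
    centers_2d = pca.transform(kmeans.cluster_centers_)
    return labels, points_2d, centers_2d, pca.explained_variance_ratio_


features = load_iris().data  # stand-in data set (assumption)
labels, points_2d, centers_2d, variance_ratio = cluster_and_project(features)

fig, ax = plt.subplots()
ax.scatter(points_2d[:, 0], points_2d[:, 1], c=labels, s=20)
ax.scatter(centers_2d[:, 0], centers_2d[:, 1], c="red", marker="x", s=100)
ax.set_xlabel("PC 1")
ax.set_ylabel("PC 2")
ax.set_title(f"K-means clusters, PCA projection ({variance_ratio.sum():.0%} of variance kept)")
fig.savefig("clusters_pca.png")  # hypothetical output file name
```

The share of variance kept by the two components, shown in the title, gives a rough sense of how much the 2D picture can be trusted: the lower it is, the more the plot may hide structure that exists in the full feature space.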
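For the Dunn index mentioned in the question, a minimal sketch with NumPy and SciPy might look like the code below. It assumes the common definition (smallest distance between points of different clusters divided by the largest cluster diameter); other variants exist, and this is not the jqmcvi implementation. The function name `dunn_index` and the toy data are made up for the example. `silhouette_score` and `davies_bouldin_score` from `sklearn.metrics` are computed alongside it as internal measures that scikit-learn does provide.

```python
import numpy as np
from scipy.spatial.distance import cdist, pdist
from sklearn.cluster import KMeans
from sklearn.datasets import load_iris
from sklearn.metrics import davies_bouldin_score, silhouette_score


def dunn_index(points, labels):
    """Smallest between-cluster distance divided by the largest cluster diameter.

    Needs at least two clusters and at least one cluster with two distinct points.
    """
    clusters = [points[labels == label] for label in np.unique(labels)]
    # Diameter: largest pairwise distance inside a cluster (0 for a single point).
    max_diameter = max(pdist(c).max() if len(c) > 1 else 0.0 for c in clusters)
    # Separation: smallest distance between points of two different clusters.
    min_separation = min(
        cdist(clusters[i], clusters[j]).min()
        for i in range(len(clusters))
        for j in range(i + 1, len(clusters))
    )
    return min_separation / max_diameter


# Toy check: two clusters of diameter 1 that are 10 apart.
toy_points = np.array([[0.0, 0.0], [0.0, 1.0], [10.0, 0.0], [10.0, 1.0]])
toy_labels = np.array([0, 0, 1, 1])
toy_dunn = dunn_index(toy_points, toy_labels)

# Same measures on a K-means clustering of the Iris data (stand-in data set).
features = load_iris().data
labels = KMeans(n_clusters=3, n_init=10, random_state=0).fit_predict(features)
iris_dunn = dunn_index(features, labels)
iris_silhouette = silhouette_score(features, labels)
iris_davies_bouldin = davies_bouldin_score(features, labels)

print(f"Dunn index (higher is better): {iris_dunn:.3f}")
print(f"Silhouette score (higher is better): {iris_silhouette:.3f}")
print(f"Davies-Bouldin score (lower is better): {iris_davies_bouldin:.3f}")
```

Because this version computes all pairwise distances, it is only practical for small to medium data sets; for larger ones, distances between centroids are sometimes used as a cheaper approximation.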
